A Behind-the-Scenes Look at Writing at and Running MacStories

Earlier today, Kerry Provenzano released the latest episode of Paper Places, a Relay show that features interviews with writers about writing. This month, Kerry interviewed me about how I got into tech writing, running MacStories, and my writing process. It was a lot of fun, and we covered a lot of ground that I haven’t talked about much elsewhere.

Claude Code Remote Control Tips

This Week on MacStories Podcasts

Previously, On MacStories

App Debuts

Interesting Links

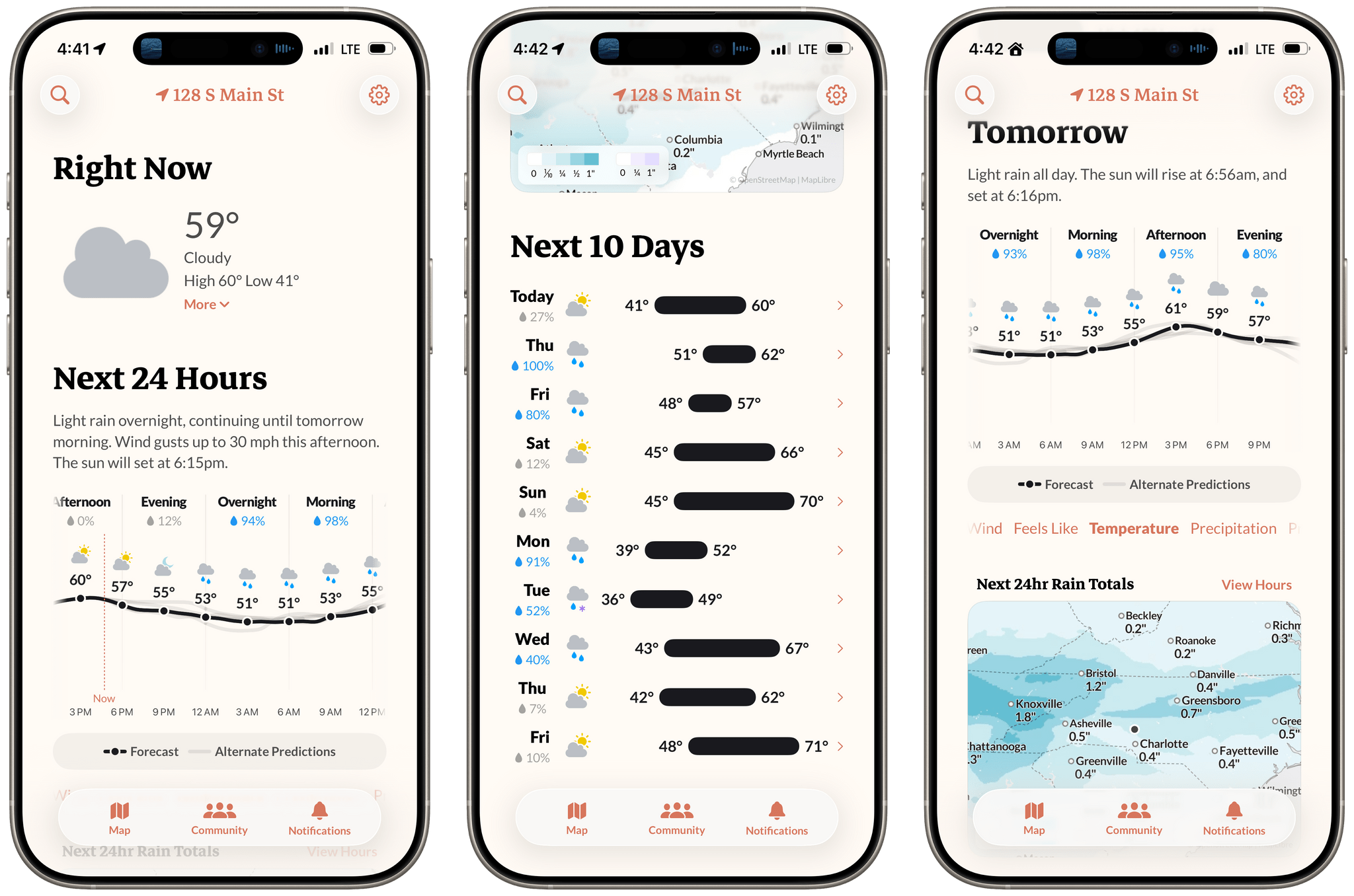

Acme Weather: A Fresh Take on Forecast Uncertainty

Earlier this week, the founders of Dark Sky made their post-Apple debut with a new weather app for the iPhone and Apple Watch: Acme Weather. It’s a terrific 1.0 with all the details you’d expect, plus a few interesting features that set it apart from other apps in its category.

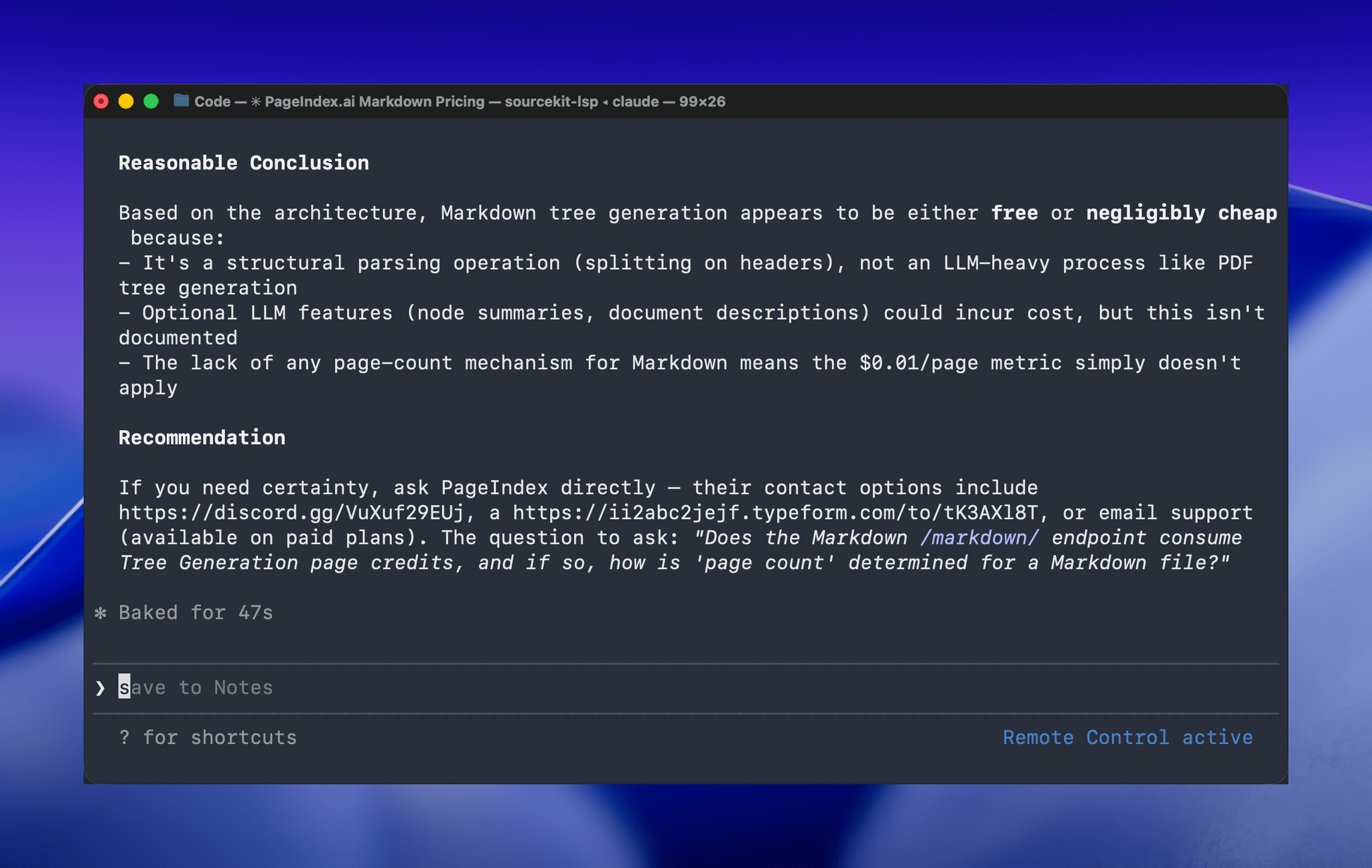

Hands-On with Claude Code Remote Control

One of the greatest frustrations I’ve had with Claude Code is feeling tied to my desk or being stuck in a macOS Screen Sharing window. Claude Code’s new Remote Control feature, which was introduced late yesterday, promises to eliminate that frustration entirely. Here’s how it works.