Astute take by Ben Thompson on how Amazon is building an operating system for the home with Alexa:

Amazon seized the opportunity: first, Alexa was remarkably proficient from day one, particularly in terms of speed and accuracy (two factors that are far more important in encouraging regular use than the ability to answer trivia questions). Then, the company moved quickly to build out its ecosystem in two directions:

- First, the company created a simple “Skills” framework that allowed smart devices to connect to Alexa and be controlled through a relatively strict verbal framework; in a vacuum it was less elegant than, say, Siri’s attempt to interpret natural language, but it was far simpler to implement. The payoff was already obvious at last year’s CES: Alexa support was everywhere.

- Secondly, “Alexa” and “Echo” are different names because they are different products: Alexa is the voice assistant, and much like AWS and Amazon.com, Echo is Alexa’s first customer, but hardly its only one. This year’s CES announcements are dominated by products that run Alexa, including direct Echo competitors, lamps, set-top boxes, TVs, and more.

“Works with Alexa” sure feels like this year’s CES motto (I try not to pay too much attention to CES announcements, but the underlying trends are interesting).

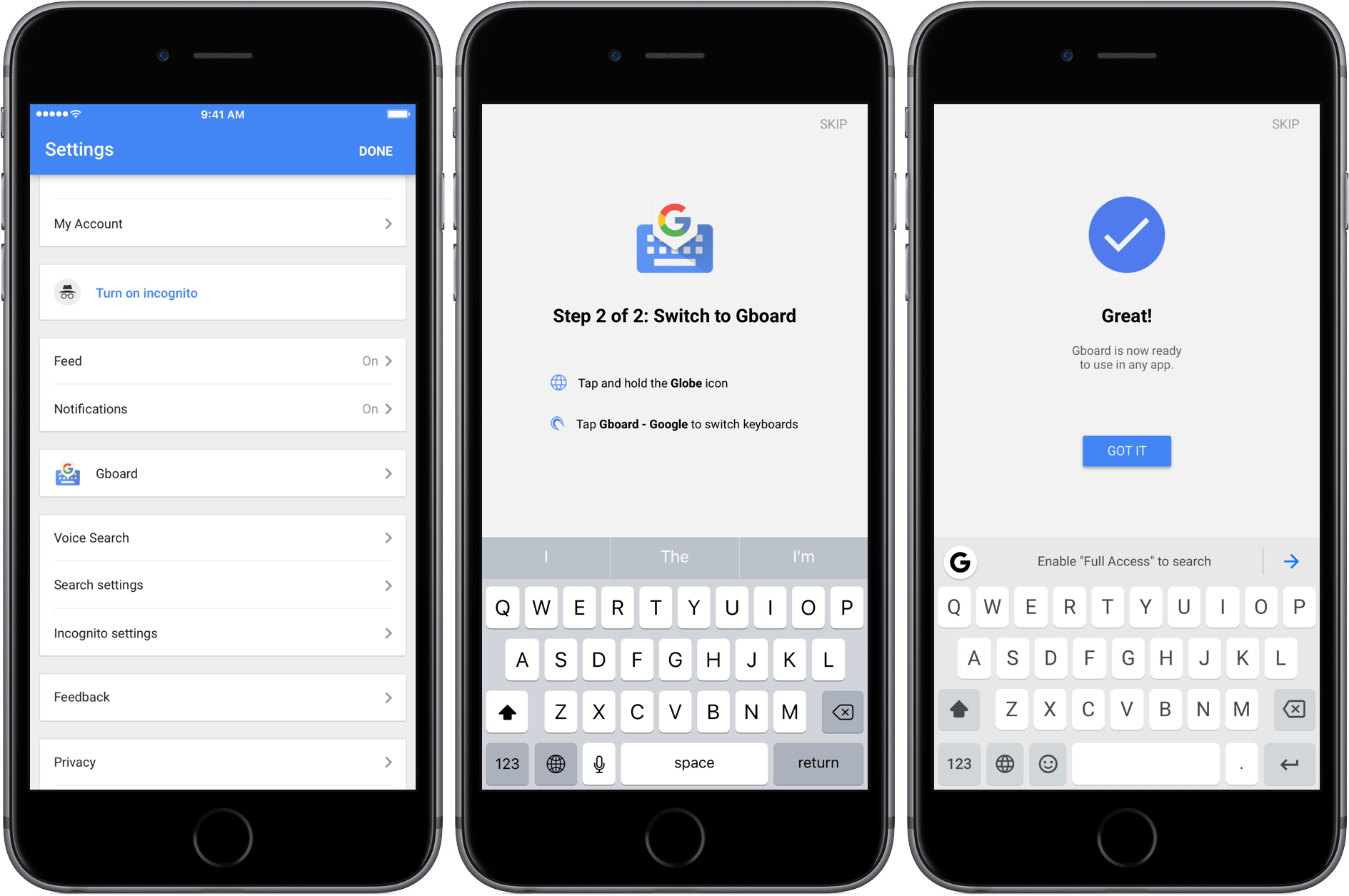

I use both HomeKit/Siri and Alexa. There are advantages and problems to both ecosystems: Apple’s approach is slower, perhaps more careful, and Siri works internationally; Alexa and the Echo are only available in a few countries, but the experience is leaner, generally faster, and there are dozens of compatible devices and skills launching every week. It’s a complicated comparison: Alexa works with web services while Siri integrates with native apps and hardware (like Touch ID); Alexa is expanding to a variety of accessories and third-party services, but Siri and HomeKit are more directly tied into your iOS devices.

I expect Apple to continue opening up SiriKit to developers to match Amazon’s rich ecosystem of skills, but even with more domains and apps, I think the idea of a dedicated assistant for the home is a winning one. On the other hand, I wonder how quickly Amazon can launch Alexa/Echo in other countries and build richer conversational experiences that go beyond simple commands. This will be fun to watch.