Beautiful, poignant story by Steven Zeitchik, writing for The Hollywood Reporter, on the magic of going to an Oasis concert in 2025.

It would have been weird back in Oasis’ heyday to talk about a big stadium-rock show being uniquely “human” — what the hell else could it be? But after decades of music chosen by algorithm, of the spirit of listen-together radio fracturing into a million personalized streams, of social media and the politics that fuel it ordering acts into groups of the allowed and prohibited, of autotuning and overdubbing washing out raw instruments, of our current cultural era’s spell of phone-zombification, of the communal spaces of record stores disbanded as a mainstream notion of gathering, well, it’s not such a given anymore. Thousands of people convening under the sky to hear a few talented fellow humans break their backs with a bunch of instruments, that oldest of entertainment constructs, now also feels like a radical one.

And:

The Gallaghers seemed to be coming just in time, to remind us of what it was like before — to issue a gentle caveat, by the power of positive suggestion, that we should think twice before plunging further into the abyss. To warn that human-made art is fragile and too easily undone — in fact in their case for 16 years it was undone — by its embodiments acting too much like petty, well, humans. And the true feat, the band was saying triumphantly Sunday, is that there is a way to hold it together.

I make no secret of the fact that Oasis are my favorite band of all time, one that, very simply, defined my teenage years. They’re responsible for some of my most cherished memories with my friends, enjoying music together.

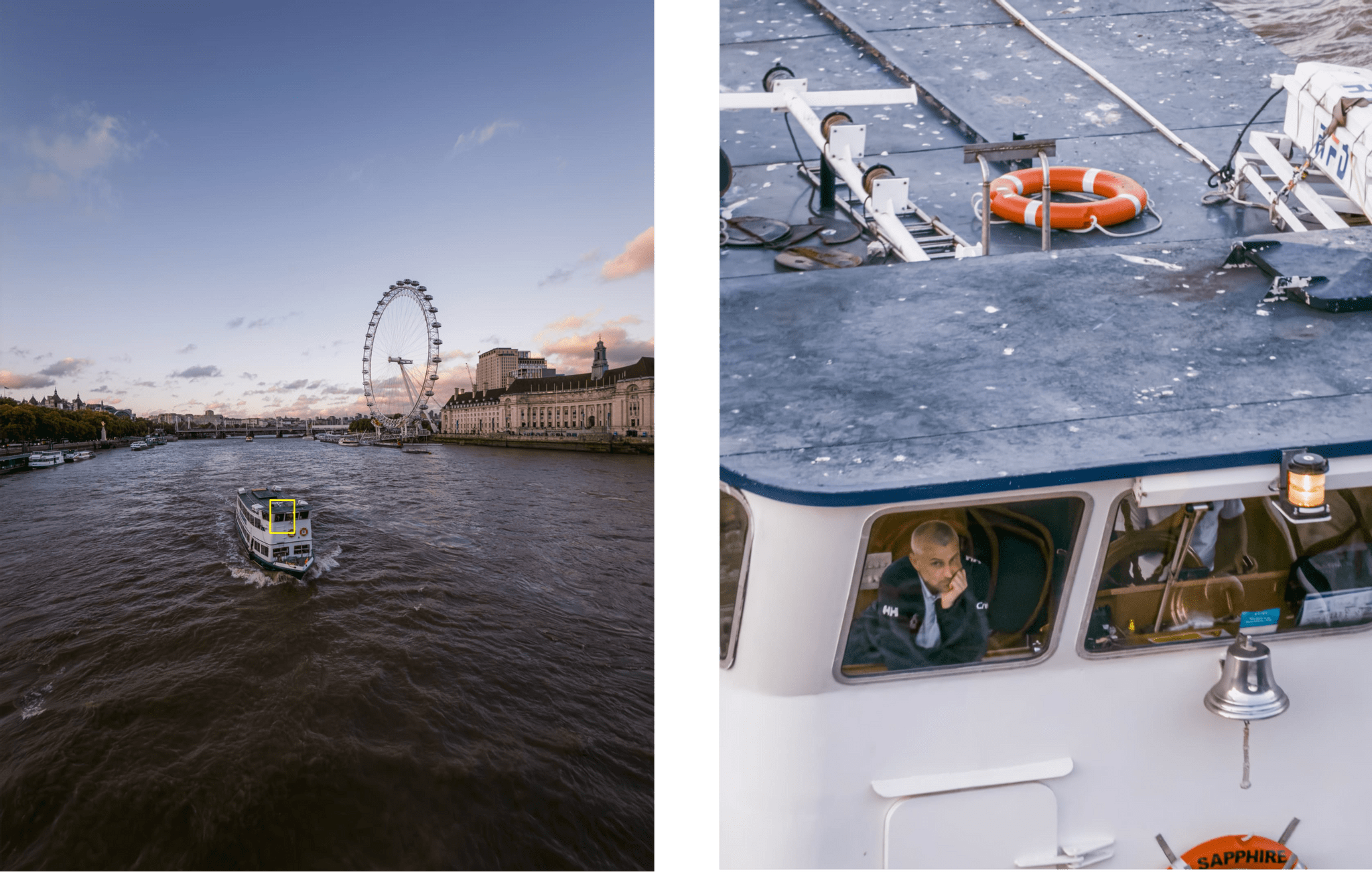

I was lucky enough to be able to see Oasis in London this summer. To be honest with you, we didn’t have great seats. But what I’ll remember from that night won’t necessarily be the view (eh) or the audio quality at Wembley (surprisingly great). I’ll remember the sheer joy of shouting Live Forever with Silvia next to me. I’ll remember doing the Poznan with Jeremy and two guys next to us who just went for it because Liam asked everyone to hug the stranger next to them. I’ll remember the thrill of witnessing Oasis walk back on stage after 16 years with 80,000 other people feeling the same thing as me, right there and then.

This story by Zeitchik hit me not only because it’s Oasis, but because I’ve always believed in the power of music recommendations that come from other humans – not algorithms – who would like you to also enjoy something. And to do so together.

If only for two hours one summer night in a stadium, there’s beauty to losing your voice to music not delivered by an algorithm.
